Trends in advanced device fabrication require combined lithography-etching multi-patterning sequences and self-aligned multi-patterning to form devices’ finest features at subwavelength dimensions.

As EUV lithography (13.5 nm) moves to higher numerical apertures and new thin resists, new multi-patterning sequences must be developed with mutually compatible resists and adjacent layers that avoid resist poisoning, promote adhesion, and allow spent materials to be removed easily without damaging similar materials nearby. Subsequent pattern transfers that form device structures by etching require mutually etch-exclusive resists, masks, and spacer materials: each must be selectively removable by an etch process that leaves the other materials unaffected.
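One way to make "mutually etch-exclusive" concrete is to tabulate the etch rate of every material in every candidate etch process, then check that each material has at least one etch that removes it much faster than everything else. The Python sketch below assumes a hypothetical three-material set (resist, hardmask, spacer), made-up etch names and rates, and an arbitrary 20:1 threshold; none of these values come from real process data.

```python
# Hypothetical etch rates in nm/min: ETCH_RATES[etch][material].
# Etch names, materials, and numbers are illustrative placeholders only.
ETCH_RATES = {
    "etch_A": {"resist": 120.0, "hardmask": 0.5, "spacer": 1.0},
    "etch_B": {"resist": 2.0, "hardmask": 80.0, "spacer": 1.5},
    "etch_C": {"resist": 1.0, "hardmask": 0.8, "spacer": 60.0},
}


def selective_etches(rates, target, min_selectivity=20.0):
    """Etches that remove `target` at least `min_selectivity` times faster
    than every other material in the table."""
    found = []
    for etch, by_material in rates.items():
        target_rate = by_material[target]
        others = [r for m, r in by_material.items() if m != target]
        if all(target_rate >= min_selectivity * r for r in others):
            found.append(etch)
    return found


def is_mutually_etch_exclusive(rates, min_selectivity=20.0):
    """True if every material has at least one etch that removes it selectively."""
    materials = next(iter(rates.values())).keys()
    return all(selective_etches(rates, m, min_selectivity) for m in materials)


for material in ("resist", "hardmask", "spacer"):
    print(material, selective_etches(ETCH_RATES, material))
print(is_mutually_etch_exclusive(ETCH_RATES))
```

Raising the threshold to 50:1 makes the check fail, because in this made-up table etch_B removes the hardmask only 40 times faster than the resist.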

A material’s resistance or susceptibility to a given etch chemistry is ultimately quantified by its etch rate. The etch rate is the difference between thickness measurements taken before and after exposure to a specific wet or dry etchant, divided by the exposure time. Selectivity is the ratio of two materials’ etch rates in the same etchant. For example, a patterning hardmask must etch much more slowly than the underlying material it protects, so the ratio of the underlying material’s etch rate to the hardmask’s etch rate must be high.
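The two definitions above reduce to simple arithmetic. This minimal Python sketch computes each etch rate from pre- and post-etch thickness and exposure time, then the selectivity as the ratio of the underlying film’s rate to the hardmask’s rate; the thickness readings and the 2-minute etch are hypothetical.

```python
def etch_rate(thickness_before_nm, thickness_after_nm, etch_time_min):
    """Etch rate in nm/min: thickness removed divided by exposure time."""
    if etch_time_min <= 0:
        raise ValueError("etch time must be positive")
    return (thickness_before_nm - thickness_after_nm) / etch_time_min


def selectivity(rate_etched, rate_protecting):
    """Ratio of the etch rate of the material being etched to that of the
    material meant to survive (e.g. a hardmask). Higher is better."""
    if rate_protecting == 0:
        return float("inf")
    return rate_etched / rate_protecting


# Hypothetical readings; both films exposed to the same etchant for 2 minutes.
film_rate = etch_rate(500.0, 300.0, 2.0)  # underlying film: 100.0 nm/min
mask_rate = etch_rate(50.0, 45.0, 2.0)    # hardmask: 2.5 nm/min
print(selectivity(film_rate, mask_rate))   # 40.0
```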

The demand for smartphone cameras, video conferencing, surveillance, and autonomous driving has fueled explosive growth in CMOS image sensor (CIS) manufacturing over the last decade. As CIS becomes an increasingly important element of today’s consumer electronics, its production poses unique challenges that must be addressed. As pixel sizes shrink, the number of pixels in the array increases, which complicates process control of the sensor, especially for the color filter array (CFA) and on-chip lens (OCL). With the push to pixel sizes of 1 µm and below, the ability to find sub-micron defects and macro-level variations within the pixel array becomes even more important to ensure a uniform, unobstructed response throughout the active pixel sensor (APS) array.
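To see why shrinking pixels multiplies the pixel count, consider a fixed active array area: halving the pixel pitch fits four times as many pixels. The sketch below assumes square pixels with no gaps and a hypothetical 4 mm × 3 mm array; dimensions are integers in nanometers so that floor division is exact.

```python
def pixel_count(width_nm: int, height_nm: int, pitch_nm: int) -> int:
    """Whole square pixels that fit in a fixed array area at the given pitch."""
    return (width_nm // pitch_nm) * (height_nm // pitch_nm)


# Hypothetical 4 mm x 3 mm active array, expressed in nanometers.
WIDTH_NM, HEIGHT_NM = 4_000_000, 3_000_000

for pitch_nm in (2000, 1000, 700):
    count = pixel_count(WIDTH_NM, HEIGHT_NM, pitch_nm)
    print(f"{pitch_nm / 1000:.1f} um pitch -> {count:,} pixels")
```

Every one of those additional pixels has its own color filter and on-chip lens, so the number of sites that can carry a defect grows with the same square-law.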

CIS differs from other semiconductor devices in that it converts light energy into electrical signals. Like other semiconductor devices, it is manufactured on silicon wafers and follows typical back-end packaging processes such as grinding, sawing, and electrical testing. A typical CIS device has an APS region in the center of the die with electrical I/Os (bondpads) on the periphery. Deionized water is often used to clean up mobile contamination left behind by wafer thinning or die singulation. This cleaning carries an inherent risk of staining or leaving residue on the APS, which affects the quantization of light and is considered a killer, or yield-limiting, defect.

We depend, or hope to depend, on machines, especially computers, to do many things, from organizing our photos to parking our cars. Machines are becoming less and less “mechanical” and more and more “intelligent.” Machine learning has become a familiar phrase to many people in advanced manufacturing. The next natural question people may ask is: How do machines learn?

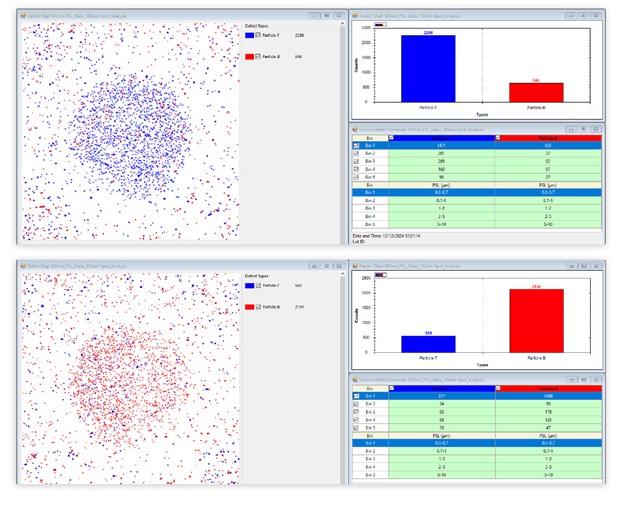

Recognizing diverse objects is a clear indicator of intelligence. Specific to semiconductors, recognizing various types of defects and categorizing them is an important task that initially was carried out solely by humans. Gradually, this classification process was automated by using computer programs running ever-evolving algorithms. Today, most defects are detected and classified by such systems in advanced facilities.

Before machine learning was widely used, there was a period when system setup was done purely by humans. After studying a task through observation and experiments, engineers wrote rules and implemented them as programs for computers to run. In this scenario, the machine does not learn; it simply repeats the programmed process, making decisions based on the embedded rules. This approach is very labor-intensive: engineers must extract the rules from human classifiers, create the programmatic logic to implement them, and verify the result. Sometimes it is very difficult, or impossible, to translate a decision-making process that humans perform, often subconsciously, into computer language.
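As an illustration of this pre-machine-learning approach, here is a minimal Python sketch of a hand-coded defect classifier. The features (size, brightness, circularity), thresholds, and class names are all hypothetical; the point is that every decision boundary was chosen by a person and never changes unless someone edits the code.

```python
from dataclasses import dataclass


@dataclass
class Defect:
    # Hypothetical features an inspection step might report for one defect.
    size_um: float
    brightness: float   # normalized to background, 0 (dark) to 1 (bright)
    circularity: float  # 1.0 means a perfect circle


def classify_by_rules(d: Defect) -> str:
    """Fixed, hand-written rules: nothing here is learned from data."""
    if d.size_um > 5.0:
        # Large, elongated defects are called scratches.
        return "scratch" if d.circularity < 0.3 else "large_particle"
    if d.brightness > 0.7 and d.circularity > 0.8:
        return "particle"
    if d.brightness < 0.3:
        return "residue"
    return "unclassified"  # no rule matched; a human must review it


print(classify_by_rules(Defect(size_um=8.0, brightness=0.5, circularity=0.1)))
```

Every new defect type or process change means another rule, another round of coding, and another verification pass, which is exactly the labor described above.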

Meeting the yield and performance demands of 3D NAND becomes more difficult with each generation. First-generation devices, at 24-32 layer pairs, pushed process tools to their extremes, and aspect ratios climbed quickly from 10:1 to 40:1 for today’s 64-96 pair single-tier devices. Aspect ratios increased as fast as the manufacturing challenges. To continue bit-density scaling, processing improved to enable multi-bit storage per cell, but even more layer pairs are still needed. As layer pairs increase, plasma etch becomes exponentially slower.
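Aspect ratio is etch depth divided by hole diameter, and depth grows linearly with the number of layer pairs. The sketch below assumes a hypothetical 50 nm per layer pair and a uniform 120 nm channel hole (real holes taper), values picked only so that the output spans the 10:1 to 40:1 range mentioned above.

```python
def channel_aspect_ratio(layer_pairs: int, pair_thickness_nm: float,
                         hole_diameter_nm: float) -> float:
    """Aspect ratio = etch depth / hole diameter for a single-tier stack.
    Assumes a straight (non-tapered) hole through the whole stack."""
    depth_nm = layer_pairs * pair_thickness_nm
    return depth_nm / hole_diameter_nm


# Hypothetical geometry: 50 nm per layer pair, 120 nm channel hole.
for pairs in (24, 32, 64, 96):
    print(f"{pairs:>3} pairs -> {channel_aspect_ratio(pairs, 50.0, 120.0):.1f}:1")
```

With these assumptions, 24 pairs gives 10:1 and 96 pairs gives 40:1; each added pair deepens the hole without widening it.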

This was quickly addressed by tier stacking, which splits the massive stack into two tiers, and the number of tiers will likely increase to three or more in the future. The advantage of a two-tier process is that it reduces a single etch step to a more manageable process, i.e., two 64-pair etches instead of one 128-pair etch, or two 96-pair etches instead of one 192-pair etch. Devices with 256 pairs in two or three tiers are in development now, and 384 or more pairs are expected soon. Control of individual channel hole profiles improved, but at the cost of increasing device integration challenges, such as adding a joint in the middle of the stack. These integration challenges are compounded by variation combined from multiple process steps. There is an increasing need to identify, measure, separate, and control each of these sources of variability.
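When variation sources are independent, their variances add, so the combined 1-sigma spread is the root-sum-square of the individual contributions. This Python sketch assumes hypothetical, uncorrelated contributions from the lower-tier etch, the upper-tier etch, and overlay at the joint; correlated sources would need covariance terms that are omitted here. Splitting the total into variance shares shows which source dominates and is the natural first target for control.

```python
import math


def combined_sigma(*sigmas: float) -> float:
    """Root-sum-square of independent 1-sigma contributions (variances add)."""
    return math.sqrt(sum(s * s for s in sigmas))


def variance_shares(sources: dict) -> dict:
    """Fraction of the total variance contributed by each independent source."""
    total = sum(s * s for s in sources.values())
    return {name: s * s / total for name, s in sources.items()}


# Hypothetical 1-sigma contributions (nm) to a channel-hole placement metric.
sources = {"lower_tier_etch": 3.0, "upper_tier_etch": 4.0, "joint_overlay": 12.0}

print(combined_sigma(*sources.values()))  # 13.0
for name, share in variance_shares(sources).items():
    print(f"{name}: {share:.1%}")
```

With these made-up inputs the joint overlay term accounts for about 85% of the total variance, even though the two etch contributions are not negligible on their own.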

I find myself educating colleagues and customers alike about misconceptions surrounding the general field of automated defect classification (ADC). Here are some classics:

- Automatic v. Automated Defect Classification: People frequently believe the “A” in ADC is for “automatic” and have a perception that an ADC system requires no human interaction whatsoever. The truth is that an ADC solution is no different from any other tool on the manufacturing floor. Just like an etcher or CMP system, ADC executes a recipe and produces a result. Also, like other tools, that recipe needs to be created by a tool owner and from time to time needs to be adjusted as processing changes are implemented.

- ADC is hard to configure: Setting up an ADC classifier is like training an operator. Just as you would show a human trainee multiple examples of defects, an ADC system needs a similar learning session. And, as with a human trainee, you’d want to test what it has learned and, based on that test, make minor adjustments if needed. Modern ADC solutions are built with an intuitive UI designed to guide you through the natural steps of collecting and managing samples, configuring image detection, setting up classifiers, and verifying the results. The biggest difference versus training a human is that you only need to train a single ADC system, not a small army of human reviewers.

- ADC classifier performance is unpredictable: A well-represented set of samples and clearly defined, visually distinct classes are key for both ADC and operators. When those conditions are met, an ADC classifier is very predictable.

- ADC is perfect: Like a human operator, ADC is not perfect. If an operator is confused by certain samples, ADC will most likely be confused, too.
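The train-then-test workflow described in the list can be sketched with a deliberately simple classifier. This Python example uses a nearest-centroid rule, a stand-in chosen for clarity rather than the algorithm of any particular ADC product, with hypothetical (size, brightness) features: training computes the average feature vector of each class from labeled samples, and testing measures accuracy on samples held out of training.

```python
import math
from collections import defaultdict


def train(samples):
    """'Learn' one centroid (mean feature vector) per class from labeled examples."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(col) / len(rows) for col in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Assign the class whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


def accuracy(centroids, samples):
    """Fraction of held-out samples classified correctly: the 'test' after training."""
    correct = sum(predict(centroids, f) == label for f, label in samples)
    return correct / len(samples)


# Hypothetical (size_um, brightness) features for two visually distinct classes.
train_set = [((0.5, 0.9), "particle"), ((0.7, 0.8), "particle"),
             ((3.0, 0.2), "residue"), ((2.6, 0.3), "residue")]
test_set = [((0.6, 0.85), "particle"), ((2.8, 0.25), "residue")]

model = train(train_set)
print(accuracy(model, test_set))
```

Well-separated classes like these give stable predictions. When class centroids sit close together, small feature differences flip the result, which mirrors the operator confusion described in the last item.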

You may have noticed a newcomer to the 3D InCites community. But Onto Innovation is not a new company. It is the result of the 2019 merger of equals of two successful process control solutions providers who wanted to expand to serve the semiconductor manufacturing supply chain from end to end. I recently interviewed Mike Sheaffer, senior director of corporate communications for Onto Innovation, to get the story.