Every day, consumers rely on an invisible network of specialty semiconductor devices without realizing it. The smartphone in your pocket is a good place to start. It knows when you rotate the screen thanks to MEMS sensors, and its camera delivers crisp images through advanced CMOS image sensors. Meanwhile, fast charging technology, wireless connectivity, facial recognition, and high-frequency communications all depend on specialty power devices, radio frequency (RF) filters, and photonic technologies working seamlessly behind the scenes.

Success in every specialty device category hinges on advanced process control solutions that ensure what matters most, whether shape, thickness, uniformity, defects, or material properties, is tightly understood and controlled. All of this must be done at a low cost of ownership while maintaining the throughput needed for high-volume manufacturing (HVM). For device manufacturers, failing to meet these requirements doesn’t just increase process complexity; it translates directly into longer ramps, missed customer commitments, and higher cost per device.

As we explored in the first two parts of our Specialty Device Surge series, these unsung heroes of modern electronics are rapidly scaling in complexity and volume, causing headaches for device manufacturers. In this final blog, we’ll turn our attention to the strategies manufacturers can employ to make that pain go away. From advanced metrology to inspection and data-rich feedback for process control, we’ll explore the capabilities enabling specialty devices to deliver the performance consumers expect at the scale manufacturers want.

Challenges Revisited

Before we go any further, let’s recap the challenges specialty device manufacturers face, separated by device type. We’ll begin with microelectromechanical systems, a.k.a. MEMS.

MEMS – MEMS include a variety of device types, including pressure sensors, gyroscopes, accelerometers, microphones, and surface acoustic wave (SAW)/bulk acoustic wave (BAW) radio frequency MEMS. These devices demand extremely tight control over specialized materials and fabrication steps, where small variations in film thickness, crystal orientation, and etch quality can directly degrade performance. Across MEMS, the core challenge involves maintaining wafer-level uniformity and precise feature profiles at scale to ensure consistent electrical, mechanical, and frequency behavior.

CMOS Image Sensors (CIS) – Modern CMOS image sensors are extremely sensitive to nanoscale process variation. As such, they require tight control of epitaxial thickness, dopant uniformity, and trench dimensions to achieve low noise and high light-capture efficiency. The challenge is amplified by the device’s stacked architecture, which demands precise metrology and inspection across pixels, CMOS circuitry, and microlens layers to maintain uniform performance.

SiC and GaN Power Devices – SiC and GaN power devices face growing manufacturing challenges as they move to larger wafer sizes (200mm and, increasingly, 300mm). These challenges include crystalline defects, epi thickness variation, critical dimension (CD) control, and backside and particle defects, all of which can impact yield and reliability. The core challenge for power device manufacturers is to maintain rigorous inspection and metrology for thick, defect-prone wafers while keeping cost of ownership competitive with conventional silicon power devices. The inability to distinguish benign defects from true killer defects early in the process can result in unnecessary scrap, escaped reliability failures, or overly conservative screening that limits output.

Photonics and Co‑Packaged Optics (CPO) – Silicon photonics and co-packaged optics face significant manufacturing challenges due to the tight integration of lasers, waveguides, microlenses, and opto-electronic components within a single high-density module. Achieving reliable performance requires precise control of optical features, material uniformity, and multi-die assembly across multiple process domains where small variations can cascade into system-level loss.

Enabling Precision and Control in Specialty Device Manufacturing

Many of the challenges manufacturers face in the specialty segment can be addressed through capabilities integrated directly into the process flow. These capabilities enable real-time adjustment and control of the fabrication process to maximize yield and improve device performance.

MEMS Devices – In RF BAW manufacturing, device performance is extremely sensitive to small variations in piezoelectric film thickness and acoustic stack uniformity. Traditionally, inline measurements have helped identify non-uniformity but done little to correct it directly. By combining high-precision inline thickness metrology with software-driven run-to-run process control, manufacturers can move beyond passive monitoring to active performance control.

In this application, wafer-level thickness data are analyzed in real time to quantify across-wafer non-uniformity trends. That information is then fed directly into a downstream trimming process, where correction parameters are automatically adjusted on a wafer-by-wafer basis. The result is a closed-loop workflow that not only detects variation but actively compensates for it, reducing across-wafer non-uniformity by an order of magnitude. This integrated approach enables manufacturers to hit tight frequency specifications earlier in ramp, reduce binning losses, and improve RF BAW yield at production scale.
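As a rough illustration of the closed loop described above, the Python sketch below computes a per-site trim dwell time from measured piezo thickness (the feed-forward step), then updates the trim-rate estimate with an exponentially weighted moving average (EWMA), a common run-to-run feedback scheme. It is a minimal sketch under stated assumptions: the site names, the linear trim-rate model, the target thickness, and the EWMA weight are all hypothetical, and it does not describe any specific vendor's software.

```python
from statistics import mean, pstdev


def nonuniformity_pct(thickness_nm):
    """Across-wafer non-uniformity as (1-sigma / mean) * 100."""
    values = list(thickness_nm.values())
    return 100.0 * pstdev(values) / mean(values)


def trim_dwell_s(thickness_nm, target_nm, rate_nm_per_s):
    """Feed-forward step: per-site dwell time needed to trim each site to target.

    Assumes a linear trim model (removal = rate * dwell). Trimming only removes
    material, so sites at or below target get zero dwell.
    """
    return {site: max(0.0, t - target_nm) / rate_nm_per_s
            for site, t in thickness_nm.items()}


def apply_trim(thickness_nm, dwell_s, true_rate_nm_per_s):
    """Simulate the trim tool using its actual (possibly drifted) removal rate."""
    return {site: t - dwell_s[site] * true_rate_nm_per_s
            for site, t in thickness_nm.items()}


def ewma_rate_update(rate_estimate, observed_rate, weight=0.5):
    """Run-to-run feedback: blend the newly observed rate into the estimate."""
    return weight * observed_rate + (1.0 - weight) * rate_estimate


# Hypothetical pre-trim thickness map (nm) for three sites on one wafer.
pre = {"center": 1010.0, "mid": 1005.0, "edge": 1000.0}
dwell = trim_dwell_s(pre, target_nm=1000.0, rate_nm_per_s=1.0)
post = apply_trim(pre, dwell, true_rate_nm_per_s=0.8)  # the tool has drifted

# Post-trim metrology closes the loop: infer the actual rate at the center site.
observed = (pre["center"] - post["center"]) / dwell["center"]
next_rate = ewma_rate_update(1.0, observed, weight=0.5)
```

With the drifted tool, the center and mid sites are left about 2 nm and 1 nm above target in this toy case, and the EWMA moves the rate estimate from 1.0 to about 0.9 nm/s for the next wafer. A production controller would also need to handle measurement noise, metrology delay, and full 2D site maps.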

While this approach is specific to RF BAW devices, similar combinations of inline metrology and software-driven process control can be applied across other MEMS devices to stabilize critical dimensions, structural layer thickness, and structural symmetry that directly impact sensitivity, bias stability, and long‑term reliability.

CMOS Image Sensors (CIS) – Modern CIS are built on stacked architectures that combine pixel structures, CMOS circuitry, and optical elements such as microlenses. While individual inspection or metrology steps can highlight issues within a single layer, many yield and performance problems emerge only when variability compounds across the full stack.

To address this, manufacturers increasingly correlate inspection and metrology data across multiple layers of the CIS flow. Structural and defect inspection of isolation trenches, metrology of epitaxial thickness and dopant uniformity, and optical-layer inspection are combined through software to build a unified view of pixel formation. This cross-layer correlation allows engineers to trace image non-uniformity or noise back to its true origin, whether it begins in epitaxy, implantation, trench definition, or optical layers, rather than discovering the issue late in the flow. By unlocking this multi-layer insight, manufacturers can intervene earlier, preserve image quality, and protect yield across increasingly dense pixel arrays.
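One simple way to picture cross-layer correlation is to compare a per-die image-quality metric against each upstream layer's measurements and rank the layers by the strength of the relationship. The Python sketch below does this with a plain Pearson correlation. The layer names and data are hypothetical, and correlation only points engineers toward a likely origin; it does not prove cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def rank_layers(layer_metrics, image_metric):
    """Rank upstream layers by |correlation| with a per-die image-quality metric."""
    scores = {layer: abs(pearson(values, image_metric))
              for layer, values in layer_metrics.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical per-die data for five dies (same die order in every list).
image_nonuniformity = [1.0, 2.0, 3.0, 4.0, 5.0]
layers = {
    "epi_thickness": [10.0, 20.0, 30.0, 40.0, 50.0],
    "implant_dose": [5.0, 3.0, 4.0, 5.0, 3.0],
    "trench_cd": [100.0, 100.0, 100.0, 100.0, 100.0],
}
ranking = rank_layers(layers, image_nonuniformity)
```

In this toy data set the epitaxial-thickness series tracks image non-uniformity perfectly, so it ranks first. In practice, engineers would follow such a lead with targeted experiments, since several layers can co-vary.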

SiC and GaN Power Devices – Wide-bandgap power devices such as SiC and GaN introduce a unique challenge: crystalline defects originating in the substrate can propagate vertically through epitaxial layers, becoming latent or killer defects in finished devices. Identifying which defects truly matter requires more than isolated inspection. It demands full wafer visibility and data correlation across process steps.

Figure 1: 360° full wafer defect view including sub-surface defects. In this image, concentric circular fields represent the frontside, edge and bevel areas of the wafer.

In this application, manufacturers begin by capturing a 360-degree view of the wafer, inspecting the frontside, backside, and edges to fully characterize the defect population. Substrate inspection performed before epitaxial growth identifies crystal defects early, while post-epi inspection reveals which of those defects persist and propagate into active layers. Software then correlates defect maps across both stages to isolate defects that originate in the substrate and extend through the epitaxial stack.

To further distinguish benign defects from true killers, electrical characterization is applied at the substrate level to measure defect resistivity. Defects that both propagate vertically and exhibit low resistivity—an indication of a high likelihood of current leakage or shorting under operating conditions—are flagged as true killer defects. This multi-modal, correlated workflow transforms inspection from simple defect counting into root-cause analysis, enabling smarter screening decisions, higher yield, and improved long-term device reliability.
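The substrate-to-epi correlation and resistivity screen described above can be sketched as two filters applied in sequence. In this minimal Python version, a substrate defect counts as propagated if a post-epi defect lies within a fixed matching radius, and a propagated defect is flagged as a killer candidate if its measured resistivity falls below a threshold. The coordinates, radius, and threshold are hypothetical. The fixed radius is also a simplifying assumption; a real flow would use calibrated, tool-specific matching rules.

```python
from dataclasses import dataclass
from math import hypot
from typing import List, Optional


@dataclass(frozen=True)
class Defect:
    x_mm: float
    y_mm: float
    # Resistivity measured at the substrate stage; None if not characterized.
    resistivity_ohm_cm: Optional[float] = None


def propagated(substrate: List[Defect], post_epi: List[Defect], radius_mm: float) -> List[Defect]:
    """Substrate defects that have a post-epi defect within radius_mm."""
    return [
        d for d in substrate
        if any(hypot(d.x_mm - e.x_mm, d.y_mm - e.y_mm) <= radius_mm for e in post_epi)
    ]


def killer_candidates(substrate, post_epi, radius_mm, max_resistivity_ohm_cm):
    """Propagated defects whose low resistivity suggests leakage or shorting risk."""
    return [
        d for d in propagated(substrate, post_epi, radius_mm)
        if d.resistivity_ohm_cm is not None and d.resistivity_ohm_cm < max_resistivity_ohm_cm
    ]


substrate_map = [
    Defect(0.0, 0.0, resistivity_ohm_cm=0.5),      # propagates, low resistivity
    Defect(10.0, 10.0, resistivity_ohm_cm=1000.0),  # propagates, high resistivity
    Defect(20.0, 0.0, resistivity_ohm_cm=0.1),      # does not propagate
]
post_epi_map = [Defect(0.05, 0.02), Defect(10.0, 10.1), Defect(50.0, 50.0)]

flagged = killer_candidates(substrate_map, post_epi_map, radius_mm=0.2, max_resistivity_ohm_cm=10.0)
```

In this toy example only the defect at the origin is flagged. The defect at (10, 10) propagates but has high resistivity and is treated as benign, and the defect at (20, 0) never reaches the epi layer.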

Photonics and Co-Packaged Optics (CPO) – Photonics and CPO represent one of the most integration-dense manufacturing environments, one in which independently fabricated components must ultimately function together as a single optical system. Defects that appear manageable at the component level can compound during assembly, leading to significant system-level optical loss.

At the component level, precision metrology and inspection are applied to each critical element. V-groove structures are measured for angle, depth, and height while simultaneously being inspected for particles or obstructions that compromise fiber alignment. Waveguides are analyzed for width, height, sidewall angle, and roughness, allowing manufacturers to correlate structural variation and local defects directly to optical loss. Microlenses are screened for deformation, cracks, or surface contamination that could degrade beam quality. Laser devices are monitored for mesa geometry and aperture dimensions, with feedback applied to etch processes, thereby stabilizing optical power and wavelength.

The challenge intensifies during final CPO assembly where multiple die are bonded, stacked, and aligned within a single module. Inspection at this stage must verify placement accuracy, bonding quality, and die planarity. Even minor warpage or misalignment can negate upstream process control. By integrating data from component-level inspection through final assembly, manufacturers gain visibility into compounding defect mechanisms and can intervene before system-level failures occur. In CPO manufacturing, this integrated approach is essential to protecting yield and optical performance.
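Because optical losses expressed in decibels add along a serial path, small per-interface penalties accumulate quickly in an assembled module. The Python sketch below totals a hypothetical link budget and converts it to the fraction of optical power delivered. The interface names and dB values are illustrative assumptions, not measured data for any real CPO design.

```python
def total_loss_db(losses_db):
    """Losses in dB along a serial optical path simply add."""
    return sum(losses_db.values())


def transmitted_fraction(loss_db):
    """Convert a dB loss into the fraction of optical power that survives."""
    return 10 ** (-loss_db / 10.0)


# Hypothetical per-interface losses (dB) for one optical path through a module.
nominal = {
    "laser_to_lens": 1.0,
    "lens_to_waveguide": 1.5,
    "waveguide": 0.5,
    "fiber_coupling": 1.0,
}
total_nominal = total_loss_db(nominal)

# A small assembly misalignment adds 0.5 dB at the fiber coupling interface.
degraded = dict(nominal, fiber_coupling=1.5)
total_degraded = total_loss_db(degraded)
```

In this illustrative budget, an extra 0.5 dB at a single interface reduces delivered power from about 40% to about 35% of launch power. This is why errors introduced during assembly can undo upstream process control.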

Integrated Intelligence

Across MEMS, CIS, power, and photonic devices, specialty device manufacturers may find that the greatest value comes from integrating metrology and inspection data across tools, layers, and process steps. By correlating structural, optical, electrical, and defect information—often with 360-degree wafer visibility—manufacturers can move from reactive defect detection to proactive process control.

This integrated approach is critical to achieving the performance, yield, and reliability required for today’s most advanced and highly specialized semiconductor devices. Ultimately, manufacturers benefit most when inspection and metrology stop being isolated checkpoints and instead function as an integrated manufacturing intelligence layer that shortens ramp times, protects yield, and accelerates time to market. The challenge is no longer whether defects can be found, but whether insight can be gained early enough to act before yield, schedule, or reliability are impacted.

Christopher Haire is a marketing content specialist at Onto Innovation and a former business journalist.

You Have a Challenge? Let’s talk.

We’d love to connect with you.

Looking to learn more about our innovative solutions and capabilities? Our team of experts is ready to assist you. Reach out today and let’s start a conversation about how we can help you achieve your goals.

In a world where high-bandwidth memory, GPUs, and advanced AI packages are all the rage, it is easy to forget the important role specialty devices play. These unsung heroes of modern life perform critical functions across a wide range of industries, including automotive, telecommunications, data centers, emerging AI hardware ecosystems, and consumer electronics, just like the smartphone in your hands, on your desk, or in your pocket. You know, the little pocket computer that never leaves your side.

And with new functions being added to consumer electronics with each new generation, demand for specialty devices is on the rise. At the same time, manufacturers face a growing number of challenges brought on by the increasing complexity of these devices, which include SiC and GaN power devices, MEMS sensors, RF filters, photonics, and CMOS image sensors. We will cover all of these device types in this blog, the second in our three-part series, The Specialty Device Surge.

As discussed in part one of our series, the specialty segment is moving to larger wafer sizes. While this transition promises higher throughput and improved economies of scale, it also introduces new manufacturing and process control challenges, many of which are unique to each device category. Why? Each type of specialty device relies on its own materials, architectures, and process steps.

Across the industry, this complexity shows up in different ways. MEMS devices rely on a variety of materials or key processes depending on their core function: thick piezoelectric films, suspended mechanical structures, and near‑perfect plasma‑etched sidewalls, each requiring highly specialized deposition and metrology techniques. CIS manufacturing spans multiple bonded layers—pixel, CMOS, and microlens—each with its own set of defect, CD, and uniformity challenges that directly shape image quality. Power devices such as SiC and GaN introduce further hurdles: crystalline defects, trench‑shape control, epitaxial thickness uniformity, and surface‑quality demands that grow more difficult as these materials scale toward larger wafers. Photonics and co‑packaged optics push complexity even further, requiring precision across waveguides, doped films, microlenses, lasers, and the multi-die assembly process that integrates them into a single high-bandwidth module.

However, all these technologies share a simple truth: achieving high-volume production depends on advanced metrology, intelligent inspection, and tightly integrated feedback and feed‑forward control. Combined, these tools allow device makers to stay inside increasingly narrow process windows as specialty devices scale, diversify, and enter high‑volume production.

First, let’s dive into the challenges facing specialty devices, beginning with MEMS.

MEMS

The top five MEMS device types are pressure sensors, gyroscopes, accelerometers, microphones, and surface acoustic wave (SAW)/bulk acoustic wave (BAW) radio frequency (RF) MEMS. These are known as the Billion Dollar Club. And with good reason. Each of these devices is responsible for sales in excess of $1 billion per year. Impressive.

MEMS devices rely on either a unique material or a unique unit process to deliver their core functionality. In pressure sensors and microphones, the key material is typically a polysilicon or dielectric membrane or a piezoelectric film, deposited with carefully tuned chemical or physical vapor deposition to achieve the required film thickness and, for piezo films, the orientation that maximizes the material’s piezoelectric coefficient. Gyroscopes and accelerometers rely on interdigitated comb fingers, capacitive structures that depend on precise plasma etch to produce vertical sidewalls; any sidewall tilt can result in poor device operation.

Finally, there is the class of RF filter devices known as BAW devices. These devices rely on precisely deposited piezo films of either aluminum nitride (AlN) or scandium-doped aluminum nitride. In BAW devices, film thickness and orientation control the frequency of operation; as such, thickness uniformity across the entire wafer is essential to keeping each device operating at the required frequency.
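To see why thickness uniformity translates so directly into frequency, consider the textbook idealization of a thickness-mode resonator: a free piezoelectric plate of thickness t whose fundamental resonance corresponds to half an acoustic wavelength. This ignores the electrodes and the rest of the acoustic stack, which shift the absolute frequency in real devices, so treat it only as a first-order sketch:

```latex
f_0 \approx \frac{v_a}{2t}
\qquad\Longrightarrow\qquad
\frac{\Delta f_0}{f_0} \approx -\frac{\Delta t}{t}
```

Here v_a is the longitudinal acoustic velocity of the piezo film. Under this approximation, a 1% thickness deviation on a hypothetical 2.5 GHz filter shifts its resonance by roughly 25 MHz, with thicker sites resonating lower.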

CMOS Image Sensors (CIS)

Today’s CIS devices are anywhere from 6µm to 10µm tall, with pixels in the image layer separated from one another by isolation trenches approximately 100nm wide.

Pixel formation relies on several dopant implant steps. The sidewalls of the isolation trenches are specifically engineered to do two things: increase total internal reflection, which raises the light-capturing efficiency of the pixel, and minimize so-called dark noise (the movement of electrons within the pixel when no light is present). The latter typically involves increasing the work function of the materials along the trench sidewalls.
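The total internal reflection mentioned above follows from Snell’s law. Light traveling in the silicon pixel is totally reflected at the trench sidewall when it strikes at an angle (measured from the sidewall normal) larger than the critical angle. Assume, purely for illustration, an oxide-filled trench with a refractive index of about 1.46 and silicon at about 4 in the visible range:

```latex
\theta_c = \arcsin\!\left(\frac{n_{\mathrm{fill}}}{n_{\mathrm{Si}}}\right)
\approx \arcsin\!\left(\frac{1.46}{4.0}\right) \approx 21^\circ
```

Rays striking the sidewall at more than θ_c from its normal are reflected back into the pixel. A lower-index fill shrinks θ_c and widens that range. The index values here are illustrative, and real trench stacks with liners and other films behave in more complicated ways.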

Forming a pixel imager presents multiple challenges, from epitaxial layer thickness and dopant uniformity metrology to isolation trench defect inspection and CD and shape metrology. Each must be addressed to create the perfect pixel for imaging.

Several unique metrology and inspection steps are needed for each layer. Layer 1 of the CIS is the pixel layer; layer 2 is the CMOS layer, which is usually hybrid-bonded to the pixel layer; and layer 3 features the microlens array, which is either bonded to or manufactured directly on the pixel wafer.

SiC and GaN Power Devices

In recent years SiC has seen a tremendous surge in popularity given its use for switching high voltages in EV motors. Today, SiC is in production at 200mm. However, some manufacturers have started to produce 300mm SiC wafers for applications that take advantage of the material’s unique thermal conductivity.

Inspection and metrology play key roles in the production of SiC power devices. First, inspection is used to identify latent or killer crystalline defects in the initial substrate and subsequent epitaxial SiC layers. Then, regular particle defect inspection and all-surface inspection are performed to catch additional defects on the edge or backside of the wafers. Multiple particle defect inspections are carried out throughout the remainder of the high-volume manufacturing process.

Now on to metrology. The ability to conduct accurate measurements plays a key role in understanding the thickness, dopant concentration, and uniformity of the epitaxial layers grown on the initial substrate. The importance of metrology does not end there. Across the remainder of the process flow, CD metrology and trench shape metrology play a part in ensuring device yield and performance.

Unlike SiC, GaN technologies migrated to 300mm wafers earlier. For GaN power devices, the challenges include crystalline defect inspection, surface roughness metrology, AlGaN layer homogeneity, and CD metrology.

The key need for original equipment manufacturers supporting customers in this segment is inspection and metrology technology that can handle these materials and their associated wafer thicknesses while performing the necessary inspection and metrology steps at a cost of ownership suited to power devices. After all, SiC and GaN devices must remain economically viable alternatives to ever-improving silicon power devices.

Photonics and Co-Packaged Optics

Photonics technologies have been around for decades, but they have evolved with the times. Now you can find photonics in a growing number of applications, including laser printing and xerography, facial recognition, and several telecommunication-based applications. Today, however, we’re seeing a new demand driver for this technology: AI.

Current AI devices communicate via standard metal traces. However, the increasing bandwidth requirements of AI chipsets and the power dissipation incurred from the use of copper wiring within data centers have combined to create a demand for a high-speed technology that consumes little power: silicon photonics.

Figure 1: Left to right, examples of V-grooves, basic waveguide geometry, and refractive and diffractive micro-lens structures. Each physical device brings with it its own set of key inspection and metrology requirements.

Silicon photonics replaces these metal traces with optical waveguides that use light, not electrons, to carry information. In the case of co-packaged optics (CPO), which are integrated directly with chips, photonics provides ultra-high-bandwidth, low-power, light-based data transmission. CPO combines laser sources with waveguide-based silicon chips, micro-lenses, and opto-electronic converters in a package that fits neatly on a modern panel-level AI package. This one small module represents the cumulative challenges of multiple manufacturing segments under the umbrella of photonics and optics. Addressing them requires V-groove inspection and metrology, waveguide metrology, micro-lens inspection and metrology, and work in a number of other areas, including dopant uniformity (Figure 1). In the end, the success of any given CPO module relies on the assembly of all the die that go into it.

Conclusion

Whether the subject is MEMS structures demanding near-perfect sidewalls, CIS stacks spanning multiple bonded layers, power devices pushing wide-bandgap materials to their limits, or photonics and co‑packaged optics, the common challenge manufacturers face is control. Across every specialty device category, success hinges on advanced metrology and inspection solutions that can precisely measure what matters most (shape, thickness, uniformity, defects, and material properties) at high throughput and low cost.

In the final blog of this three-part series, we’ll shift our attention from the challenges facing specialty devices to their solutions. We hope you will join us to learn more about these unsung heroes of modern life.

Specialty devices are the unsung heroes of modern life. For many in the semiconductor industry today, the spotlight is on the SiC and GaN power devices used in automotive, green energy, fast-charge consumer electronics (CE), and high-performance computing (HPC) applications (Figures 1 and 2).

However, specialty devices are more than just power devices. They are a broad class of semiconductor components delivering a variety of functions across multiple industry segments, ranging from microelectromechanical systems (MEMS) in automobiles and CE to radio frequency (RF) filters for 5G/6G communications.

Figure 1: Planar SiC MOSFET and trench SiC MOSFET

Figure 2: Vertical GaN and GaN on Si high electron mobility transistor (HEMT)

Photonics are another type of specialty device making waves in the industry. Previously viewed as something of a dark horse in the specialty sphere, photonics have made a comeback. Today, photonics are used for 3D sensing in multiple CE applications, such as smartphone user verification and 3D imaging; in automotive applications, where scanning lasers are used in advanced driver assistance systems (ADAS); and in telecommunications, where photonics have long served as optical transceivers supporting the conversion from copper wiring to optical fiber communications in data centers. While these and other applications exist, the killer photonics application is shaping up to be co-packaged optics (CPO), which enables optical communication directly from packaged XPU devices supporting AI applications.

Nearly all specialty devices are, or once were, manufactured on 150mm or 200mm wafers. Now, however, specialty devices are moving to larger wafer sizes, either 200mm or 300mm depending on device type.

With many specialty devices scaling to larger wafer sizes, the semiconductor industry faces new challenges in process control. After all, specialty devices are known for delivering specialized features or capabilities based on a unique process step or material that often requires a customized inspection and metrology solution.

To optimize the manufacturing process, real-time process control, powered by data analytics and software, has become indispensable in specialty device fabrication. To scale specialty technologies for high-volume manufacturing, manufacturers need integrated solutions and specialty-focused platforms offering flexibility, precision, and automation across multiple wafer sizes.

In this three-part blog series, we will begin by discussing one of the most important trends in specialty devices, the transition to larger wafer sizes and what this means for specific devices. The following blogs will focus on the challenges facing the manufacturing of specialty devices and the solutions addressing these challenges.

From Niche Applications to Mainstream Products

Originally, specialty devices were referred to as More-than-Moore devices because their value went beyond the simple node scaling of traditional CMOS devices. They were also enabled by one or more “specialty” materials or process steps. In fact, the semiconductor industry did not start using the term “specialty devices” until these devices transitioned from niche applications to mainstream products and high-volume production.

But what exactly are the benefits of these specialty materials? Let’s consider the case of specialty power devices.

The compound semiconductors used in specialty power devices, SiC and GaN, can handle high voltages more efficiently than traditional Si. They can switch high voltages in increasingly smaller areas and at higher speeds than their silicon-based counterparts, and they do so without the elaborate cooling that would otherwise be required. As these materials scale to larger wafer sizes, they require upgraded wafer fabrication equipment as well as upgraded inspection and metrology tools.

Transitioning Wafer Sizes

Specialty devices have long been the domain of 150mm and 200mm wafers, with CMOS image sensors (CIS) and power management devices being the exceptions. That’s all changing.

On the compound semiconductor front, high demand for GaN-based high-power, fast-switching technologies is motivating a wafer size transition from 200mm to 300mm, while SiC power devices are transitioning from 150mm to 200mm in high-volume manufacturing. In addition, photonics technologies now span wafer sizes from 150mm to 300mm, and MEMS devices are now offered on 300mm wafers.

As more specialty device technologies move into high-volume manufacturing, we can expect to see a greater demand for 300mm silicon, glass, and compound semiconductor-based wafer applications. However, this transition to larger wafer sizes introduces unique challenges for each specialty device type. These devices and their challenges include:

MEMS: Larger substrates and diverse materials (Si, glass, thick metals) require tighter process control of etch depth and CD, feature height across bowed or warped wafers, and surface roughness.

SiC Power: As SiC power moves to 200mm, inspection and metrology must expand sampling, deal with increased crystal defects, and support the shift to more complex device architectures, such as profiling trench MOSFETs (e.g., top and bottom CD), without killing throughput. Insufficient sampling on larger wafers creates blind spots that hurt yield and cost of ownership.

GaN Power: Moving GaN to 300mm wafers increases within-wafer variation, requiring more measurement points and precise control of trench/HEMT critical dimensions to maintain uniform performance, while dealing with increasingly brittle and bowed or warped materials.

Photonics and CPO: Co-packaged optics require metrology tools designed for die-to-die and wafer bonding (voids, Cu dishing, film variation), sub‑micron alignment, and warpage, which affects optical coupling on larger wafers and multi‑die assemblies.

CIS: CIS stacks drive high sampling density for overlay and CD uniformity. These stacks require integrated chemical mechanical planarization metrology and analytical tools to catch excursions quickly as die counts and wafer diameters increase.
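Much of the sampling pressure in the items above is geometric: wafer area grows with the square of the diameter. As a back-of-the-envelope sketch, the Python below estimates how many measurement sites are needed to keep the same areal sampling density on a larger wafer. The 49-site baseline and the optional edge-exclusion parameter are hypothetical, and uniform density is itself a simplification, since real sampling plans often weight the wafer edge more heavily.

```python
from math import ceil, pi


def usable_area_mm2(diameter_mm, edge_exclusion_mm=0.0):
    """Wafer area inside the edge-exclusion ring."""
    radius = diameter_mm / 2.0 - edge_exclusion_mm
    return pi * radius * radius


def sites_for_same_density(ref_sites, ref_diameter_mm, new_diameter_mm, edge_exclusion_mm=0.0):
    """Scale a site count so that sites per unit area stay constant."""
    ratio = (usable_area_mm2(new_diameter_mm, edge_exclusion_mm)
             / usable_area_mm2(ref_diameter_mm, edge_exclusion_mm))
    return ceil(ref_sites * ratio)


print(sites_for_same_density(49, 150, 200))  # SiC-style move, 150mm -> 200mm: 88
print(sites_for_same_density(49, 200, 300))  # GaN-style move, 200mm -> 300mm: 111
```

Keeping the same density takes about 1.8 times as many sites going from 150mm to 200mm and 2.25 times as many going from 200mm to 300mm, before any edge exclusion is applied.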

To meet these challenges and others, manufacturers need upgraded metrology and inspection tools, many of which will come from suppliers with a long-standing relationship with specialty technologies. These suppliers are best prepared to meet the wafer handling, on-wafer materials, and cost-of-ownership requirements that have become synonymous with the specialty device markets.

Conclusion

The emergence of specialty devices as a vibrant market of their own is driven by the increasing complexity and functionality of end products. Even in mature markets, such as smartphone and automotive, manufacturers continue to innovate by adding new features, which, in turn, fuels demand for additional specialty devices.

In our next blog, we will further explore the many challenges facing each type of specialty device. We hope you join us as we discuss those challenges and, in part three of our series, the solutions that are available in the specialty space today.

If you’ve been following the evolution of advanced packaging, you know that the industry is pushing boundaries like never before. From high-performance computing to industry-upending AI devices, the demand for smaller, faster, and more powerful chips is driving innovation at every level. One of the unsung heroes in this transformation: glass carriers.

These carriers are becoming essential for applications involving high-bandwidth memory (HBM), 2.5D/3D integration, and chiplet architectures. During the manufacturing process, glass carriers serve as mechanical support for thin wafers and panel-level packages. Why? Glass carriers are noted for their warpage resistance, superior rigidity, and thermal stability. This combination of glass’ exceptional flatness and rigidity enables the precise placement of dies and interposers. Additionally, glass is optically transparent, which allows through-glass alignment during bonding and stacking, a critical capability for 3D integration where multiple layers must be accurately registered.

The benefits of glass carriers, however, come with several challenges, none of which should come as a surprise to anyone who has ever handled glass, whether in the fab or at home. Glass is fragile and, as such, is prone to surface defects, subsurface inclusions, and residual stress. Each of these can negatively impact die attachment quality, interconnect reliability, and die yield.

Let’s take a look at three major yield-killing culprits.

Surface defects such as particles, pits, and scratches are among the most common issues and may occur during glass carrier handling and processing, compromising the structural integrity and performance of advanced packaging assemblies (Figure 1). Particles can interfere with the bonding process, leading to poor adhesion or electrical discontinuities, while pits and scratches can propagate stress points that weaken the carrier during thermal cycling or molding.

However, subsurface inclusions and organic contamination, which are often introduced during reclaim or cleaning, pose more critical challenges. Inclusions within the glass can create localized stress concentrations, while organic residues can reduce UV transmission and cause bonding failures. These contaminants are particularly problematic in high-density interconnect environments where optical clarity and surface purity are critical.

Figure 1: Common glass carrier defects

In addition to surface and subsurface defects, residual stress is a concern. Stress that manifests during thermal processing or mechanical handling can, over time, lead to cracks or delamination, undermining the thermo-mechanical integrity of the entire package.

These potential challenges are compounded each time a glass carrier is reused in an effort to reduce overall packaging costs. Fortunately, technologies have been developed to address this obstacle. These technologies integrate AI-driven defect classification, real-time analytics, and adaptive scanning modes to maintain throughput without sacrificing accuracy, enabling manufacturers to detect surface anomalies, subsurface inclusions, and stress-induced defects with unprecedented precision.

Enabling Defect-Free Glass Carriers

Today’s wafer-based inspection platforms use laser scatterometry and imaging techniques to inspect for nanometer-sized defects on a variety of opaque and transparent/semi-transparent substrates. These platforms may be suited to either R&D or high-volume advanced IC substrate (AICS) and fan-out panel-level processing (FOPLP) environments. Proprietary inspection technology with multiple detection channels and advanced signal processing algorithms is applied to achieve accuracy and reliability in glass carrier inspection.

Figure 2: Results of top (blue) and bottom (red) defect mapping.

With each channel optimized to capture unique scattering and reflection signatures, the technology differentiates between surface and subsurface defects, as well as stress-related anomalies, with remarkable accuracy. Surface particles, scratches, pits, bumps, surface contamination, film or bulk wafer stress, and voids/inclusions can all be detected, measured, characterized, and imaged. One of the most significant capabilities of this technology is simultaneous top, bottom, and internal defect mapping, a critical need for transparent and semi-transparent substrates where defects can occur across multiple planes (Figure 2).

Beyond defect detection, angstrom-level film thickness measurement provides precise control over surface coatings and residual layers. This capability is particularly valuable in the glass reclaim process, where even minor variations in film thickness can impact UV transmission and bonding performance. Accurate defect detection and grading ensure that only glass carriers meeting stringent quality standards are returned to production.
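The grading step in the reclaim loop can be pictured as a simple disposition rule. The sketch below is a minimal illustration under stated assumptions, not Onto Innovation's actual logic: the `CarrierScan` fields, the `grade()` function, the "reclean" outcome, and every threshold are hypothetical placeholders; real limits come from each fab's process and customer specifications.

```python
from dataclasses import dataclass


@dataclass
class CarrierScan:
    """Per-carrier inspection summary (hypothetical fields)."""
    carrier_id: str
    surface_defects: int      # particles, pits, scratches on top/bottom faces
    subsurface_defects: int   # voids/inclusions detected inside the glass
    stress_flags: int         # stress-related anomalies
    film_residue_nm: float    # measured residual film thickness, in nm


# Hypothetical limits for illustration only; real limits are process-specific.
MAX_SURFACE = 5
MAX_SUBSURFACE = 0
MAX_STRESS = 0
MAX_RESIDUE_NM = 0.5  # 5 angstroms


def grade(scan: CarrierScan) -> str:
    """Return 'scrap', 'reclean', or 'return_to_production' for one carrier."""
    # Bulk defects and stress cannot be cleaned away, so the carrier is retired.
    if scan.subsurface_defects > MAX_SUBSURFACE or scan.stress_flags > MAX_STRESS:
        return "scrap"
    # Surface defects or leftover film may be recoverable by another clean (assumption).
    if scan.surface_defects > MAX_SURFACE or scan.film_residue_nm > MAX_RESIDUE_NM:
        return "reclean"
    return "return_to_production"


if __name__ == "__main__":
    lot = [
        CarrierScan("GC-001", 1, 0, 0, 0.2),
        CarrierScan("GC-002", 12, 0, 0, 0.2),
        CarrierScan("GC-003", 0, 2, 0, 0.1),
    ]
    for scan in lot:
        print(scan.carrier_id, grade(scan))
```

Separating bulk defects (scrap) from surface issues (potentially recoverable) mirrors the surface-versus-subsurface distinction that the multi-channel inspection is meant to provide.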

By deploying technologies that provide comprehensive defect mapping and stress analysis, manufacturers can mitigate these risks and maintain the mechanical and thermal integrity required for next-generation devices. This capability is especially valuable in markets such as AI devices, high-performance computing, and automotive electronics, where reliability is non-negotiable. With this combination of advanced optical technology and robust algorithmic analysis, manufacturers can achieve higher yields, lower costs, and greater confidence in their packaging processes.

Conclusion

As packaging complexity grows and the use of glass carriers increases, inspection systems that combine multi-depth defect mapping and stress analysis will become indispensable for ensuring yield and reliability in AI and HPC devices. With the explosive growth in AI-driven data centers and advanced packaging architectures, manufacturers need solutions that combine accuracy, speed, and cost efficiency. The laser-based wafer inspection technology discussed in this blog meets several glass carrier challenges head-on while enabling advanced packaging houses to maintain defect-free glass carriers in support of next-generation advanced packaging.

The future of glass carriers is clear: with the right technologies at the ready, manufacturers have the tools and the means to meet the growing needs of the AI and HPC markets.

Biography

Jason Lin is Director of Product Marketing at Onto Innovation.

You Have a Challenge? Let’s talk.

We’d love to connect with you.

Looking to learn more about our innovative solutions and capabilities? Our team of experts is ready to assist you. Reach out today and let’s start a conversation about how we can help you achieve your goals.

AI workloads are pushing the boundaries of compute, memory, and interconnect architectures, and to meet these goals, manufacturers are rapidly accelerating advanced logic and DRAM development. Chief among these innovations are gate-all-around (GAA) logic transistors and vertical gate (VG) DRAM, two device architectures that promise higher performance, improved power efficiency, and greater scalability.

However, the arrival of these device architectures introduces new levels of manufacturing complexity brought on by increasingly high aspect ratios (HAR) and the continued shrinking of device dimensions by roughly 20–30% per generation. Fortunately, new capabilities in optical critical dimension (OCD) metrology are up to the challenge of measuring and monitoring features such as gate profiles, film thickness, and structural uniformity at the nanometer scale. With the right tools on hand, manufacturers can maintain high-volume process control for GAA logic and VG DRAM in the AI era (Figure 1).
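To see why a 20–30% shrink per generation strains metrology, it helps to compound it. The sketch below assumes the shrink applies uniformly to a single linear dimension, uses a 10 nm starting feature purely as an illustrative value, and assumes a ±5% control window; `scaled_dimension()` and `absolute_tolerance()` are hypothetical helpers.

```python
def scaled_dimension(d0_nm: float, shrink: float, generations: int) -> float:
    """Linear dimension after `generations` nodes, each shrinking it by `shrink` (0-1)."""
    return d0_nm * (1.0 - shrink) ** generations


def absolute_tolerance(d_nm: float, rel_tol: float) -> float:
    """A fixed relative tolerance expressed in nanometers at dimension d_nm."""
    return d_nm * rel_tol


if __name__ == "__main__":
    start_nm = 10.0  # illustrative starting feature size (assumption)
    for shrink in (0.20, 0.30):
        for gen in range(4):
            d = scaled_dimension(start_nm, shrink, gen)
            tol = absolute_tolerance(d, 0.05)  # assumed ±5% control window
            print(f"shrink {shrink:.0%}, gen {gen}: {d:5.2f} nm, ±{tol:.2f} nm")
```

Three generations at 30% take a 10 nm feature to about 3.4 nm, so a ±5% window tightens from ±0.5 nm to roughly ±0.17 nm. That shrinking absolute margin is what pushes OCD toward higher sensitivity.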

Figure 1: GAA (left) and vertical gate DRAM (right) present new challenges for process control (pictured above)

These new capabilities are enabling the delivery of accurate, high-throughput measurements across complex 3D geometries like GAA and VG DRAM by offering smaller spot size, higher signal sensitivity, and enhanced precision.

But before we discuss these new capabilities, let’s dive deeper into the challenges of both device architectures. We’ll start with GAA.

Challenges and Solutions

GAA transistors feature HAR channels and stacks of multi-layered nanosheets, in some cases as thin as 10nm. The manufacturing complexity lies in controlling individual nanowires and accurately characterizing buried nanowires within three-dimensional architectures. Precise control over nanowire dimensions is critical for achieving the desired electrical performance, including higher transistor speeds and lower power consumption. As such, GAA manufacturers need metrology solutions capable of extracting unique signals with high sensitivity and precision from each layer, even those deeply buried under semi-opaque stacks.

While GAA logic presents unique challenges in transistor scaling, VG DRAM introduces equally complex demands in memory architecture. VG DRAM involves vertical nanowire etch, multi-layer ultra-thin film deposition, sidewall trim, and buried bitlines (electrical connections embedded deep within the silicon substrate rather than placed on the surface, which helps reduce the footprint of each memory cell). Additionally, VG DRAM for HBM4 features smaller cell blocks. Measuring directly on these devices requires an OCD system with a significantly smaller spot size, which, unfortunately, reduces the signal strength available to the optical system.
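The trade-off between spot size and signal can be estimated with a back-of-the-envelope model. The sketch below assumes constant illumination irradiance (so collected signal scales with spot area) and shot-noise-limited detection (so SNR scales with the square root of signal); real OCD optics, detectors, and targets deviate from both assumptions, and the helper names and spot diameters are hypothetical.

```python
import math


def relative_signal(spot_um: float, ref_spot_um: float) -> float:
    """Collected signal relative to a reference spot, assuming signal ∝ spot area."""
    return (spot_um / ref_spot_um) ** 2


def relative_snr(spot_um: float, ref_spot_um: float) -> float:
    """Shot-noise-limited SNR scales with the square root of collected signal."""
    return math.sqrt(relative_signal(spot_um, ref_spot_um))


def integration_time_factor(spot_um: float, ref_spot_um: float) -> float:
    """Extra integration time needed to recover the reference SNR under the same model."""
    return 1.0 / relative_signal(spot_um, ref_spot_um)


if __name__ == "__main__":
    ref = 50.0    # hypothetical reference spot diameter, µm
    small = 25.0  # hypothetical reduced spot diameter, µm
    print(f"signal: {relative_signal(small, ref):.2f}x")
    print(f"SNR: {relative_snr(small, ref):.2f}x")
    print(f"integration time to recover SNR: {integration_time_factor(small, ref):.1f}x")
```

Under this model, halving the spot diameter leaves a quarter of the signal and half the SNR, and recovering that SNR by integration alone would take four times as long. This is why SNR gains that do not cost measurement time matter for throughput.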

For both GAA logic and VG DRAM devices, incorporating real-time stabilization technologies and advanced optical modeling can improve measurement precision and enable tighter process windows. In addition, AI-guided analysis allows fabs to create robust measurement recipes faster and with greater accuracy for highly complex device structures like nanosheets and VG DRAM cells. Analysis software also enables more accurate measurements that are scalable across multiple tools, while supporting smart manufacturing and predictive analytics for advanced AI applications.

Many of today’s OCD metrology tools, however, lack the signal-to-noise ratio (SNR) needed to measure increasingly small changes in complex device structures, while other systems do not capture enough information to separate signals from multiple parameters (e.g., the dimensions of individual nanowires). Furthermore, most OCD metrology systems have spot sizes too large to fit within the reduced DRAM cell block area for HBM4.

Without advanced metrology solutions that address these challenges, manufacturers risk critical dimension variability that compromises device performance and yield. More data channels and higher SNR at higher speed are therefore needed to overcome both the reduced signal strength of smaller geometries and the increased signal complexity of taller nanowire stacks.

Onto Innovation has recently added a number of optical and algorithm innovations to its OCD arsenal. New multi-polarization angle data channels, combined with real-time stabilization technologies, enhance information content and SNR, while advanced optical modeling improves measurement precision and robustness. AI-guided analysis software, meanwhile, allows fabs to create robust measurement recipes faster and with greater accuracy for highly complex device structures, including nanosheets and VG DRAM cells, and its measurements scale across multiple tools in support of smart manufacturing and predictive analytics for advanced AI applications.

Conclusion

As the semiconductor industry advances toward AI-optimized architectures, the need for more precise and adaptive process control has become critical. The new OCD capabilities discussed in this blog enable tighter control over individual nanowires in GAA logic and allow for more accurate measurements within increasingly compact VG DRAM cell blocks. Combined, these innovations represent a foundational shift in how the industry measures, monitors, and optimizes the most advanced semiconductor technologies.

Jiangtao Hu is a product marketing senior director at Onto Innovation.

The demand for high bandwidth memory (HBM) is accelerating across the semiconductor industry, driven by boundary-pushing artificial intelligence, high-performance computing, and advanced graphics. These technologies require access to vast datasets, which in turn increases the need for memory solutions that combine speed, density, and power efficiency. HBM meets these demands by vertically stacking memory dies and linking them with ultra-fast interconnects.

As data rates rise, so does the need for higher output contact pad density. To address this, bump technologies are being pushed beyond what were previously thought to be their physical and performance limits. Today, some memory designers are innovating their way to bump sizes below 10µm in high-volume manufacturing. Be that as it may, scaling bump height down to 2µm will be a considerable challenge, leading some to explore hybrid bonding as an interconnect solution.

Hybrid bonding enables finer interconnect pitches of less than 10μm, allowing for more I/O terminals in a smaller area. This increased density translates directly into higher bandwidth and improved overall performance.
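The density gain from finer pitch is quadratic, because the pitch shrinks in both directions of the array. A minimal sketch, assuming a full square grid of connections (real layouts include keep-out zones and redundant pads); `connections_per_mm2()` is a hypothetical helper and the pitches are illustrative.

```python
def connections_per_mm2(pitch_um: float) -> float:
    """Interconnect count per mm^2 for a full square grid at the given pitch."""
    if pitch_um <= 0:
        raise ValueError("pitch must be positive")
    per_side = 1000.0 / pitch_um  # 1 mm = 1000 µm
    return per_side ** 2


if __name__ == "__main__":
    for pitch in (20.0, 10.0, 5.0):
        print(f"{pitch:4.1f} µm pitch -> {connections_per_mm2(pitch):,.0f} connections/mm^2")
```

Under this model, halving the pitch from 10µm to 5µm raises the count from 10,000 to 40,000 connections per mm², a fourfold increase.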

Traditional bump-based stacking introduces gaps of about 30µm between dies, while hybrid bonding offers direct Cu-to-Cu connections. By achieving near-zero spacing between dies, hybrid bonding significantly reduces overall package thickness and offers lower resistance and better thermal conductivity than bump-based methods. The result: improved signal integrity, reduced power consumption, and enhanced heat dissipation, each of which is critical for HBM.

Finally, hybrid bonding supports several configurations: wafer-to-wafer, die-to-wafer, and die-to-die, offering flexibility in manufacturing and integration. This adaptability is vital for scaling HBM technologies across different applications and performance tiers.

Of course, hybrid bonding presents its own set of challenges for manufacturers: increased sensitivity to particles and organic residues, lower yields, and the introduction of more costly process and process control steps. This makes the choice between sticking with tried-and-true microbump technology and adopting the emerging innovation a debate worth having.

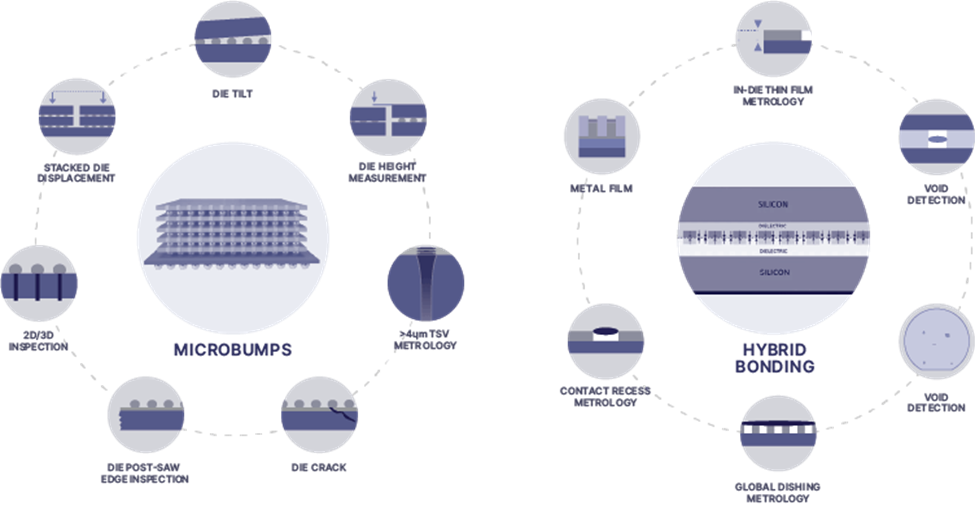

In this two-part blog series, we will compare these two interconnect solutions and discuss the challenges they face (Figure 1).

Figure 1: Interconnect challenges in microbump and hybrid bonding technologies.

Head to Head: Microbumps and Hybrid Bonding

Bumps have several advantages over hybrid bonding. As a mature technology, they are widely used and well-understood in the industry. They are also compatible with existing flip-chip and underfill processes, and are scalable for moderate density. In addition, the cost of bump technologies is lower than hybrid bonding technologies.

However, microbumps have a number of disadvantages compared to hybrid bonding, the most significant of which is their pitch limitation. Microbumps struggle at pitches below 10µm due to challenges in plating uniformity and solder reflow. Bumps also require underfill, which can introduce stress and complicate thermal management.

Limitations aside, bump technology continues to evolve. Leading suppliers of bump plating systems project a continued downscaling of bump dimensions, with diameters decreasing to the 5µm to 4µm range and heights dropping as low as 2µm to 1µm. At a 10µm pitch, the lateral footprint remains sufficient to support the high-density I/O requirements of advanced memory architectures. Concurrently, the reduced bump height enables vertical integration of up to 16 stacked HBM dies within the 775µm maximum package height defined by Joint Electron Device Engineering Council (JEDEC) standards. Consequently, bump interconnects remain a viable and scalable solution for next-generation HBM, and major manufacturers are maintaining substantial R&D investments in bump technology.
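The height budget behind the 16-die claim can be checked with simple addition: n dies plus (n - 1) inter-die gaps. The sketch below is a simplified model, not a JEDEC calculation. It counts only the core-die stack, ignores the base die, mold, and other package contributions (all of which reduce the real margin), and assumes a hypothetical 30µm die thickness. The 30µm gap reflects the traditional bump-based spacing cited in this post, compared with a 2µm bump height and near-zero hybrid-bonded spacing; `stack_height_um()` is a hypothetical helper.

```python
JEDEC_MAX_PACKAGE_UM = 775.0  # maximum package height cited in the text


def stack_height_um(n_dies: int, die_um: float, gap_um: float) -> float:
    """Height of n stacked dies separated by (n - 1) interconnect gaps."""
    if n_dies < 1:
        raise ValueError("need at least one die")
    return n_dies * die_um + (n_dies - 1) * gap_um


if __name__ == "__main__":
    die_um = 30.0  # hypothetical thinned-die thickness (assumption)
    cases = (
        ("bump, ~30 µm gap", 30.0),
        ("bump, 2 µm height", 2.0),
        ("hybrid bond, ~0 gap", 0.0),
    )
    for label, gap in cases:
        h = stack_height_um(16, die_um, gap)
        verdict = "fits" if h <= JEDEC_MAX_PACKAGE_UM else "exceeds"
        print(f"{label}: {h:.0f} µm ({verdict} {JEDEC_MAX_PACKAGE_UM:.0f} µm)")
```

Under these assumptions, the 15 gaps of 30µm alone consume 450µm, pushing a 16-die stack to 930µm, whereas 2µm bumps bring it to 510µm. The remaining headroom is what the base die and package structure must fit into.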

Hybrid bonding, while promising, presents its own set of challenges. Chief among them, hybrid bonding is especially sensitive to particles and organic residues; even 1µm particles can cause defects. These contaminants can prevent proper contact, trap gases or moisture, and lead to void formation during bonding. Voids can cause delamination or incomplete bonding, reducing mechanical integrity and electrical continuity. Hybrid bonding is also more expensive than traditional bump technologies and may require manufacturers to purchase new equipment and adopt new process flows before moving away from bump technology.

Conclusion

As demand for HBM intensifies, the industry stands at a crossroads in interconnect technology. While microbumps have evolved to support increasingly dense and tall HBM stacks, they face several obstacles. Hybrid bonding offers a compelling alternative, but not without its own hurdles. Ultimately, both technologies are advancing in parallel, each with unique strengths and limitations.

Regardless of which interconnect technology is chosen, a comprehensive suite of interconnect solutions will be needed to address the obstacles manufacturers of HBM devices face. In the second part of this two-part series, we will explore a number of these solutions that, when combined, tackle the biggest challenges facing interconnects in HBM.

Biography

Damon Tsai joined Onto Innovation in 2018 and has extensive experience in inspection and metrology, with a specialized focus on semiconductor FEOL, advanced packaging, OSAT, and specialty markets like RF, Power, and CIS. He currently serves as the Senior Director of Product Marketing, Inspection.

Woo Young Han joined Onto Innovation in 2000 and is currently Product Marketing Director, Inspection. He holds an Electrical Engineering degree from the University of Toronto.

Tim Kryman is Product Marketing Senior Director, Metrology and Inspection. Tim has been with Onto Innovation for more than 20 years and holds a BS in Accounting and Information Systems from Lock Haven University and an MBA from DeSales University.
