Semiconductor manufacturing creates a wealth of data – from materials, products, factory subsystems and equipment. But how do we best utilize that information to optimize processes and reach the goal of zero defect manufacturing?

This is a topic we first explored in our previous blog, “Achieving Zero Defect Manufacturing Part 1: Detect & Classify.” In it, we examined real-time defect classification at the defect, die and wafer level. In this blog, the second in our three-part series, we will discuss how to use root cause analysis to determine the source of defects. For starters, we will address the software tools needed to properly conduct root cause analysis for a faster understanding of visual, non-visual and latent defect sources.

Whether the discussion is about smart manufacturing or digital transformation, one of the biggest conversations in the semiconductor industry today centers on the tremendous amount of data fabs collect and how they utilize that data.

While chip makers are accumulating petabytes of data across the entire semiconductor process, a question arises: how much of that information is being fully utilized? The answer may be around 20%, according to the Semiconductor Engineering article “Too Much Fab and Test Data, Low Utilization.” This low utilization poses a challenge because fab end customers are demanding highly reliable chips: chips with zero escaping defects and with clear genealogy and traceability for manufacturers.

Many of you reading this work for companies that have started or are planning digital transformations. To succeed, these companies will need to better integrate the data they collect, including data from materials, products, processes, factory subsystems and equipment.

For smart manufacturing to truly live up to its potential, manufacturers will need inline automation that takes complete advantage of the analytics their monitoring systems generate, analytics which can be fed back to the process tools, manufacturing execution systems and other factory systems in real time. Working in concert, these integrated systems are essential to creating a zero defect manufacturing environment.

In the world of smart manufacturing, manufacturers will be tasked with providing timely, total solutions that do three things: detect and classify defects using inspection and metrology tools; conduct root cause analysis to determine the source of those defects; and employ process control and equipment monitoring, using run-to-run and fault detection and classification software, to prevent defects from recurring.

In this blog, the first in our three-part series “Achieving Zero Defect Manufacturing,” we will focus on detecting and classifying defects. We will start by looking at solutions at the defect level before moving on to the die level and the wafer level.

Automated optical inspection (AOI) is a cornerstone in semiconductor manufacturing, assembly and testing facilities, and as such, it plays a crucial role in yield management and process control. Traditionally, AOI generates millions of defect images, all of which are manually reviewed by operators. This process is not only time-consuming but also error-prone due to human involvement and fatigue, which can negatively impact the quality and reliability of the review.

In the Industry 4.0 era, the integration of a deep learning-based automatic defect classification (ADC) software solution marks a significant advancement in manufacturing automation. First, ADC solutions reduce manual workload, which means less chance of human error and higher accuracy. Second, they are poised to lower the costs associated with high-volume manufacturing (HVM).

Deep learning, a branch of machine learning based on artificial neural networks, is at the core of these ADC solutions. It mimics the human brain’s ability to learn and make decisions; this enables the system to recognize complex patterns in data without explicit programming. Compared to traditional methods, this approach offers a significant leap in processing efficiency and accuracy.

All great voyages must come to an end. Such is the case with our series on the challenges facing the manufacturing of advanced IC substrates (AICS), the glue holding the heterogeneous integration ship together.

In our first blog, we examined how cumulative overlay drift from individual redistribution layers could significantly increase overall trace length, resulting in higher interconnect resistance, parasitic effects and poor performance for high-speed and high-frequency applications. To address this, layer-to-layer overlay performance data needs to be monitored at each layer. If the total overlay error exceeds specifications at any process step, or at any location on the panel, corrective action must be taken to mitigate the drift in total overlay.
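The monitoring rule described above can be sketched in a few lines. This is a minimal illustration only, assuming overlay is reported as an (x, y) shift per layer at a single measurement site; the function name, the units and the sample numbers are hypothetical, and a production system would repeat the check at every site on the panel.

```python
from math import hypot


def first_out_of_spec_layer(layer_offsets_um, spec_um):
    """Return the 1-based layer at which cumulative overlay first exceeds spec.

    layer_offsets_um: list of (dx, dy) layer-to-layer shifts in micrometers
    (hypothetical data format). Returns None if the stack stays within spec.
    """
    total_x = 0.0
    total_y = 0.0
    for layer, (dx, dy) in enumerate(layer_offsets_um, start=1):
        # Accumulate the drift contributed by this layer.
        total_x += dx
        total_y += dy
        # Compare the magnitude of the total overlay error against the spec.
        if hypot(total_x, total_y) > spec_um:
            return layer
    return None


# Hypothetical shifts: every individual layer is well under 4 um,
# yet the accumulated drift crosses 4 um at the third layer.
offsets = [(1.0, 0.0), (1.5, 0.5), (2.0, 0.5), (1.0, 1.0)]
print(first_out_of_spec_layer(offsets, spec_um=4.0))
```

The point of the example is that no single layer needs to be out of specification for the stack as a whole to drift out, which is why the check has to run at each layer.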

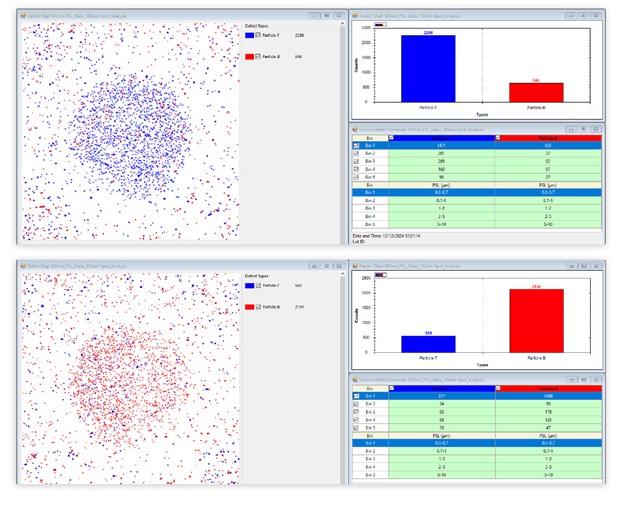

In the second installment, we explored the issue of AICS package yield and its importance in fostering a cost-effective, production-worthy process. Unlike most fan-out panel-level packaging (FOPLP) applications, AICS has relatively few packages per panel. This enormous disparity impacts yield calculations dramatically. In the AICS production process, the main challenge is the real-time tracking of yield for every panel, at every layer, throughout the fab. The solution: using advanced automatic defect classification (ADC) and yield analytics to quickly address errors.
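A quick calculation shows why the package count matters so much. The sketch below uses made-up package counts (16 and 1,600 per panel) purely for illustration; neither figure comes from the series.

```python
def panel_yield(packages_per_panel, defective_packages):
    """Fraction of good packages on one panel."""
    if packages_per_panel <= 0:
        raise ValueError("packages_per_panel must be positive")
    if not 0 <= defective_packages <= packages_per_panel:
        raise ValueError("defective_packages must be between 0 and the panel total")
    return (packages_per_panel - defective_packages) / packages_per_panel


# Hypothetical panels: one killer defect on each.
few_packages = panel_yield(packages_per_panel=16, defective_packages=1)
many_packages = panel_yield(packages_per_panel=1600, defective_packages=1)

print(f"16 packages per panel:   {few_packages:.4%}")
print(f"1600 packages per panel: {many_packages:.4%}")
```

With these assumed counts, the same single defect costs 6.25% of the panel in the first case and less than 0.1% in the second.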

In this final article of the series, we explore how overlay correction solutions compensate for panel distortion effects induced by copper clad laminate (CCL) processing, effects that impact yield and final package performance.

Over the past ten years, primarily driven by a tremendous expansion in the availability of data and computing power, artificial intelligence (AI) and machine-learning (ML) technologies have found their way into many different areas and have changed our way of life and our ability to solve problems. Today, artificial intelligence and machine learning are being used to refine online search results, facilitate online shopping, customize advertising, tailor online news feeds and guide self-driving cars. The future that so many have dreamed of is just over the horizon, if not happening right now.

The term artificial intelligence was first introduced in the 1950s, and the idea is famously associated with Alan Turing. The noted mathematician and creator of the so-called Turing Test believed that one day machines would be able to imitate human beings by doing intelligent things, whether those intelligent things meant playing chess or having a conversation. Machine learning is a subset of AI. Machine learning allows for the automation of learning based on an evaluation of past results against specified criteria. Deep learning (DL) is a subset of machine learning (FIGURE 1). Deep learning employs a multi-layered learning hierarchy in which the output of each layer serves as the input for the next layer.
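The layered hierarchy can be illustrated with a toy forward pass. This is a minimal sketch in plain Python, not a description of any particular product: the two-layer network, its weights and the choice of ReLU as the activation function are all assumptions made for the example.

```python
def relu(values):
    """Rectified linear activation: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per output unit, plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass data through the stack; each layer's output feeds the next layer."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense(activations, weights, biases))
    return activations


# Hypothetical network: 2 inputs -> 2 hidden units -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-0.5]),
]
print(forward([2.0, 1.0], layers))
```

In a trained network the weights are learned from data rather than written by hand; only the chaining of layers is shown here.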

Currently, the semiconductor manufacturing industry uses artificial intelligence and machine learning to take data and autonomously learn from that data. With the additional data, AI and ML can be used to quickly discover patterns and determine correlations in various applications, most notably those applications involving metrology and inspection, whether in the front-end of the manufacturing process or in the back-end. These applications may include AI-based spatial pattern recognition (SPR) systems for inline wafer monitoring [2], automatic defect classification (ADC) systems with machine-learning models and machine learning-based optical critical dimension (OCD) metrology systems [1][7].

As technology nodes shrink, end users are designing systems where each chip element is being targeted for a specific technology and manufacturing node. While designing chip functionality to address specific technology nodes optimizes a chip’s performance regarding that functionality, this performance comes at a cost: additional chips will need to be designed, developed, processed and assembled to make a complete system solution.

At back-end packaging houses in the past, a multi-chip module (MCM) placed various packaged chips on a printed circuit board. Today in the advanced packaging space, fabless companies are using an Ajinomoto build-up film (ABF) substrate as a method of combining various chips into a smaller form factor. As the push for higher density in smaller multi-chip module packages intensifies, process cost rises as well. Along with rising costs, the cycle times needed to process ABF substrates with ever more redistribution layers (RDL) also increase. Consequently, the need for back-end packaging houses to maintain process control and detect defects is going to be similar to what front-end fabs encountered in the 1990s.

Currently, substrates are 100µm to 150µm thick. As with front-end semiconductors, Moore’s law is going to come into play with advanced substrate packaging technology. Line widths and interconnects are going to shrink, and the need for process control and detection feedback will grow.

Reticle exposure on a non-rigid substrate will inherently require better control for rotational, scaling, orthogonal and topology variation compensation. One solution is to use a feed-forward adaptive-shot technology to address process variations, die placement errors and dimensionally unstable materials. Such a solution uses a parallel die-placement measurement process, while advanced analytics provide a means to balance productivity against yield.
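To make the compensation terms concrete, the sketch below applies one common small-angle linear form of an overlay model with translation, scaling, rotation and orthogonality terms. It is an assumption for illustration: sign conventions and parameter definitions differ between tools, the function and parameter names are hypothetical, and topology compensation is not modeled.

```python
def modeled_displacement(x, y, tx, ty, sx, sy, rot, ortho):
    """Predicted (dx, dy) displacement at nominal position (x, y).

    Assumed linear model (conventions vary by tool):
      tx, ty : translation, same units as x and y
      sx, sy : dimensionless scale errors (1e-6 equals 1 ppm)
      rot    : rotation in radians (small-angle approximation)
      ortho  : orthogonality error in radians
    """
    dx = tx + sx * x - (rot + ortho) * y
    dy = ty + sy * y + rot * x
    return dx, dy


def corrected_position(x, y, **params):
    """Nominal position shifted by the modeled displacement."""
    dx, dy = modeled_displacement(x, y, **params)
    return x + dx, y + dy


# Hypothetical example: 10 ppm scale error in x, evaluated 100 mm from center.
print(modeled_displacement(100.0, 0.0, tx=0.0, ty=0.0,
                           sx=1e-5, sy=0.0, rot=0.0, ortho=0.0))
```

In a feed-forward flow, parameters like these would be derived from the die-placement measurements and passed ahead to the exposure step so each shot can be placed where the die actually sits.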

Displacement errors can be measured on a lithography tool, but the measurements are slow, typically taking as much time to conduct as the exposure. Moving the measurements to a separate automated inspection system and feeding those corrections to the lithography system, however, can double throughput. In addition, yield software adds predictive yield analysis to the externally conducted measurement and correction procedures and increases the number of die included in the exposure field up to a user-specified yield threshold.
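Both ideas in this paragraph reduce to simple arithmetic, sketched below under stated assumptions. The timings and predicted-yield figures are invented for illustration, the function names are hypothetical, and the throughput calculation assumes the separate inspection system keeps pace with the lithography tool.

```python
def panels_per_hour(exposure_s, measurement_s, measure_on_litho_tool):
    """Lithography throughput with on-tool or off-tool measurement."""
    if measure_on_litho_tool:
        # Measurement and exposure run back to back on the same tool.
        cycle_s = exposure_s + measurement_s
    else:
        # Measurement runs in parallel elsewhere; the tool only exposes.
        cycle_s = exposure_s
    return 3600.0 / cycle_s


def largest_field_meeting_yield(predicted_yield_by_die_count, yield_threshold):
    """Largest die count per exposure field whose predicted yield meets the threshold."""
    eligible = [
        die_count
        for die_count, predicted in predicted_yield_by_die_count.items()
        if predicted >= yield_threshold
    ]
    return max(eligible) if eligible else None


# Hypothetical timings: measurement takes as long as the exposure itself.
on_tool = panels_per_hour(60.0, 60.0, measure_on_litho_tool=True)
off_tool = panels_per_hour(60.0, 60.0, measure_on_litho_tool=False)
print(on_tool, off_tool)

# Hypothetical predictions: more die per field means fewer shots but lower yield.
predicted = {1: 0.99, 2: 0.98, 4: 0.95, 6: 0.90}
print(largest_field_meeting_yield(predicted, yield_threshold=0.95))
```

The second function captures the productivity-versus-yield trade: larger fields mean fewer exposures per panel, so the selection takes the largest field that still clears the user-specified yield threshold.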