Automated optical inspection (AOI) is a cornerstone of semiconductor manufacturing, assembly and testing facilities, and as such, it plays a crucial role in yield management and process control. Traditionally, AOI generates millions of defect images, all of which are manually reviewed by operators. This process is not only time-consuming but also error-prone because of operator fatigue, which can negatively impact the quality and reliability of the review.

In the Industry 4.0 era, the integration of a deep learning-based automatic defect classification (ADC) software solution marks a significant advancement in manufacturing automation. First, ADC solutions reduce manual workload, which means less chance of human error and higher accuracy. Second, they are poised to lower the costs associated with high-volume manufacturing (HVM).

Deep learning, a branch of machine learning based on artificial neural networks, is at the core of these ADC solutions. It mimics the human brain’s ability to learn and make decisions; this enables the system to recognize complex patterns in data without explicit programming. Compared to traditional methods, this approach offers a significant leap in processing efficiency and accuracy.

Onto Innovation’s Monita Pau and Prasad Bachiraju contribute to the March 2024 edition of Semiconductor Digest.

Abstract

In traditional semiconductor packaging, manual defect review after automated optical inspection (AOI) is an arduous task for operators and engineers, involving review of both good and bad die. It is hard to avoid human errors when reviewing millions of defect images every day, and as a result, underkill or overkill of die can occur. Automatic defect classification (ADC) can reduce the number of defect images that need to be reviewed by operators. The ADC process can also be integrated with AOI engines to filter out nuisance defect images, which in turn reduces AOI image capture time. This paper will focus on how to utilize Onto Innovation’s TrueADC software product to build ADC classifiers using a multi-engine (ME) solution. The software supports convolutional neural network (CNN), deep neural network (DNN) and k-nearest neighbors (KNN) algorithms. CNN and DNN are currently the mainstream choices in the development of deep learning (DL) for ADC in the semiconductor industry. We will address how to improve classification by using multiple models, each with its own algorithm, in the classification process. As a result, the user can meet very demanding industry specifications, such as high accuracy, high purity, and high classification rate with very low escape rates.
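To make the multi-engine idea and the metrics above more concrete, here is a minimal Python sketch. It is not TrueADC's method or API: the agreement rule, the `unknown` label, the class names and the metric definitions (classification rate as the share of images auto-classified, purity as per-class precision, escape rate as the share of true defects labeled good) are all assumptions made for illustration, and the votes are made-up data.

```python
from collections import Counter

UNKNOWN = "unknown"  # hypothetical label: image is routed to manual review


def combine_votes(votes, min_agreement=2):
    """Return the most common label if enough engines agree, else UNKNOWN."""
    label, count = Counter(votes).most_common(1)[0]
    return label if count >= min_agreement else UNKNOWN


def purity(truth, predicted, label):
    """Assumed definition: of the images predicted as `label`, the share that truly are."""
    hits = [t for t, p in zip(truth, predicted) if p == label]
    return sum(1 for t in hits if t == label) / len(hits) if hits else 0.0


def summarize(truth, predicted, good_label="good"):
    """Compute illustrative ADC metrics; the definitions are assumptions."""
    pairs = list(zip(truth, predicted))
    classified = [(t, p) for t, p in pairs if p != UNKNOWN]
    correct = sum(1 for t, p in classified if t == p)
    true_defects = sum(1 for t in truth if t != good_label)
    escapes = sum(1 for t, p in pairs if t != good_label and p == good_label)
    return {
        "classification_rate": len(classified) / len(pairs),
        "accuracy": correct / len(classified) if classified else 0.0,
        "escape_rate": escapes / true_defects if true_defects else 0.0,
    }


# Made-up example: one label per engine (e.g. CNN, DNN, KNN) for five images.
truth = ["good", "scratch", "particle", "scratch", "good"]
engine_votes = [
    ("good", "good", "good"),
    ("scratch", "scratch", "particle"),
    ("particle", "good", "scratch"),  # no agreement, so left for manual review
    ("good", "good", "scratch"),      # a true defect voted good: an escape
    ("good", "good", "particle"),
]

predicted = [combine_votes(v) for v in engine_votes]
metrics = summarize(truth, predicted)
good_purity = purity(truth, predicted, "good")
print(predicted, metrics, good_purity)
```

Raising `min_agreement` in a scheme like this trades classification rate for purity: fewer images are auto-classified, but the ones that are carry stronger agreement between engines.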

With the continued advancement of environmental, social and governance goals, corporations are increasingly focused on reducing their carbon footprints. To accomplish this, these companies are being asked to operate their businesses more efficiently than ever before, whether the matter is reducing waste, water usage or power consumption. This is true for the semiconductor industry as well.

Although semiconductor manufacturing is not a smokestack industry, it is truly amazing just how many resources – from water to materials and electricity – go into making chips. To better understand the carbon footprint and environmental impact a typical fab has, consider this: based on estimates in a 2021 article in The Guardian, a 1% improvement in a factory’s production capability could save that factory 450 tons of waste, 37 million gallons of fresh water and 22.5 million kilowatt-hours of electricity over the course of a year. That small 1% change is a substantial reduction in resources used, one that makes not only operations managers happy but ESG-minded stockholders as well.

No matter how you get your news, it seems like everyone is talking about AI – and it’s either going to usher in a new era of productivity or lead to the end of humankind itself. Regardless, the AI era is here, and it’s just beginning to have an impact on our lives, our jobs and our future.

To meet the rigorous demands of AI – along with high-performance compute, 5G and electric vehicles – the semiconductor industry is seeking out new innovations to increase speed, bandwidth and functional density, and to lower energy usage, cost and latency. At the top of the list: heterogeneous integration. And to make heterogeneous integration a reality, back-end packaging houses use advanced integrated circuit substrates (AICS).

In a previous blog, we focused on one of the major challenges of manufacturing AICS – total overlay drift. For this second installment in our three-part series on packaging solutions, we explore the issue of AICS package yield and its importance in fostering a cost-effective, production-worthy process.

Packaging is becoming more and more challenging and costly. Whether the reason is substrate shortages or the increased complexity of packages themselves, outsourced semiconductor assembly and test (OSAT) houses have to spend more money, more time and more resources on assembly and testing. As such, one of the more important challenges facing OSATs today is managing die that pass testing at the fab level but fail during the final package test.

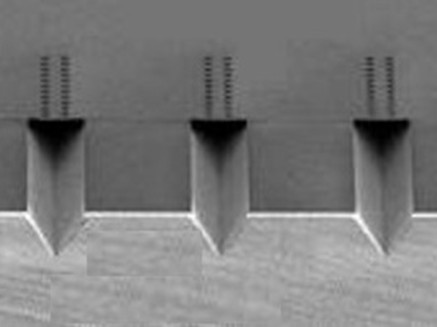

But first, let’s take a step back in the process and talk about the front-end. A semiconductor fab will produce hundreds of wafers per week, and these wafers are verified by product testing programs. The ones that pass are sent to an OSAT for packaging and final testing. Any units that fail at the final testing stage are discarded, and the money and time the OSAT spent dicing, packaging and testing the failed units are wasted (figure 1).